Hybrid Recommender

A PyTorch-based hybrid of content-based and collaborative filtering designed to optimize data efficiency and overcome the cold start problem.

To build an effective recommendation engine, engineers typically rely on either content-based or collaborative filtering. However, both have limitations, particularly the cold start problem, where new users with no historical interactions cannot receive personalized recommendations.

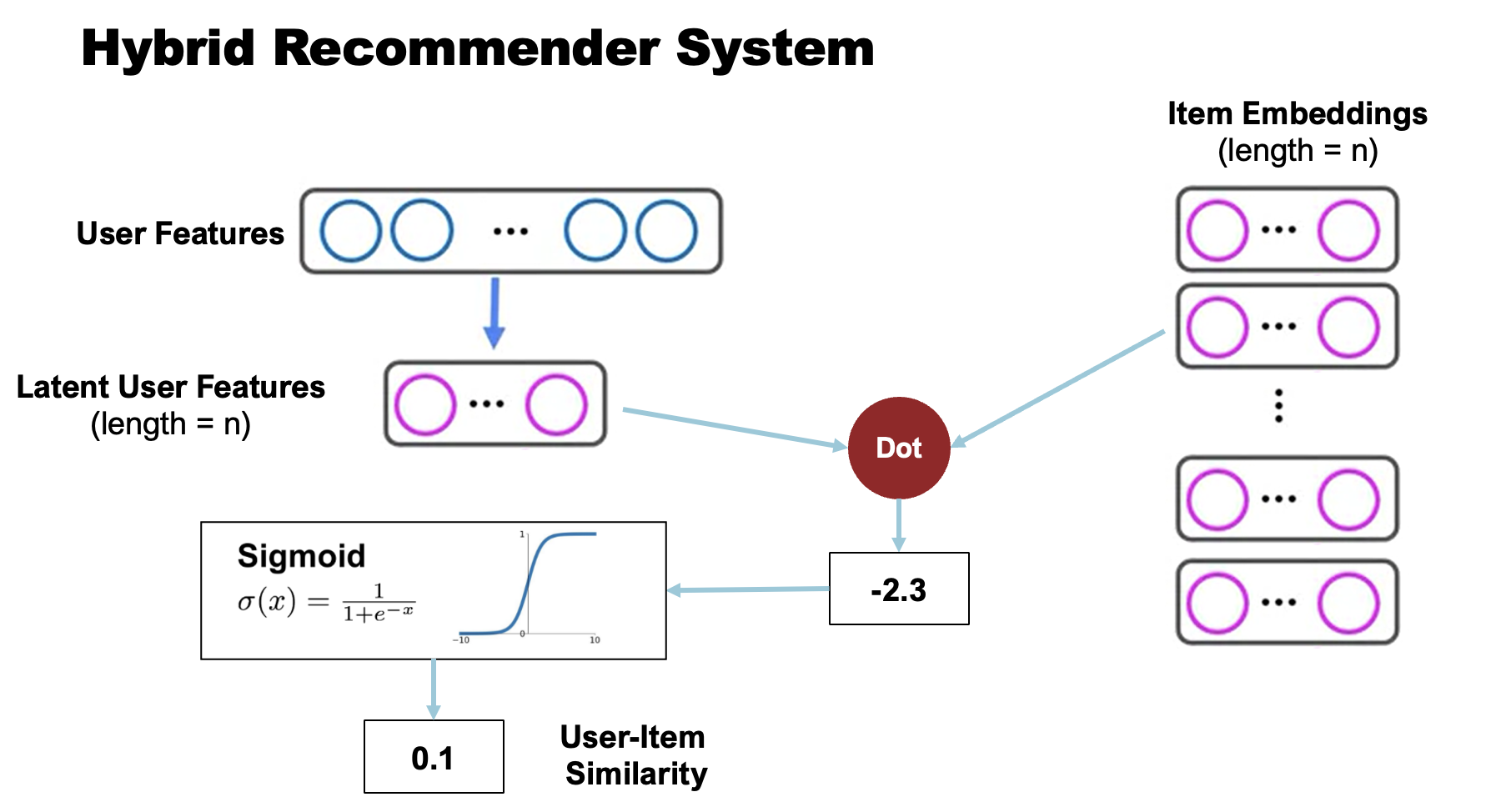

This project implements a Hybrid Recommender System in PyTorch that maps explicit user features into the same latent space as learned item embeddings. This sidesteps the cold start problem for new users while retaining the serendipitous discovery of collaborative filtering.

System Architecture & Implementation

1. Data Pipeline and Negative Sampling

Handling implicit feedback requires generating negative examples so the model can learn what a user does not prefer. The custom DataLoader class manages this pipeline:

- Data Merging: It joins user feature datasets with user-item interaction lists and filters out invalid records.

- Train/Validation Split: Interactions are shuffled and split 80/20 for training and validation.

- Dynamic Negative Sampling: During batch generation, the pipeline draws fresh negative samples at a 4:1 negative-to-positive ratio, which helps the model generalize instead of memorizing a fixed set of negatives.
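The split and sampling steps above can be sketched in plain Python. This is a minimal illustration, not the project's DataLoader: the function names `train_val_split` and `sample_negatives`, the `(user, item)` tuple format, and the choice to reject any item the user has interacted with are all assumptions made for the example.

```python
import random
from typing import Dict, List, Set, Tuple


def train_val_split(
    interactions: List[Tuple[int, int]], train_frac: float = 0.8, seed: int = 0
) -> Tuple[List[Tuple[int, int]], List[Tuple[int, int]]]:
    """Shuffle (user, item) pairs and split them into train/validation lists."""
    rng = random.Random(seed)
    shuffled = interactions[:]
    rng.shuffle(shuffled)
    cut = int(len(shuffled) * train_frac)
    return shuffled[:cut], shuffled[cut:]


def sample_negatives(
    batch: List[Tuple[int, int]],
    user_items: Dict[int, Set[int]],
    n_items: int,
    rng: random.Random,
    neg_ratio: int = 4,
) -> List[Tuple[int, int, float]]:
    """Return (user, item, label) rows: each positive plus neg_ratio random negatives."""
    rows = []
    for user, item in batch:
        rows.append((user, item, 1.0))
        seen = user_items[user]
        if len(seen) >= n_items:
            continue  # no unseen items left to draw negatives from
        for _ in range(neg_ratio):
            # Rejection sampling: redraw until the item is one the user never interacted with.
            neg = rng.randrange(n_items)
            while neg in seen:
                neg = rng.randrange(n_items)
            rows.append((user, neg, 0.0))
    return rows


# Hypothetical toy interactions: (user_id, item_id) pairs.
interactions = [(0, 1), (0, 3), (1, 2), (2, 0), (2, 4)]
user_items: Dict[int, Set[int]] = {}
for u, i in interactions:
    user_items.setdefault(u, set()).add(i)

train, val = train_val_split(interactions)
batch = sample_negatives(train, user_items, n_items=10, rng=random.Random(42))
```

Because negatives are drawn inside batch generation rather than precomputed, each epoch sees a different set of negatives for the same positives.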

2. The Hybrid PyTorch Model (CFNet)

The core of the recommender is the CFNet module, which bridges content and collaborative filtering approaches.

- Item Embeddings: Items are represented using standard PyTorch nn.Embedding layers that learn latent factors automatically.

- User Feature Mapping: Instead of learning an isolated embedding per user (which scales poorly and fails for new users), the model passes explicit user features through a linear neural network layer (nn.Linear) to map them into the same latent space as the items.

- Interaction Prediction: The model computes the dot product between the user's mapped latent representation and the item's embedding, passing the result through a Sigmoid activation function to output an interaction probability.

- Optimized Inference: For deployment and fast inference, the model includes a NumPy-based matrix multiplication method (calculate_item_recommendations) that computes full-catalog recommendations efficiently on the CPU.
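A minimal PyTorch sketch of the architecture described above follows. The layer attribute names (`item_emb`, `user_map`), the default latent size of 32, and the signature of `calculate_item_recommendations` (a user-feature matrix in, top-k item ids out) are assumptions for illustration; only the method name comes from the project.

```python
import numpy as np
import torch
from torch import nn


class CFNet(nn.Module):
    """Hybrid sketch: learned item embeddings plus a linear map from user features."""

    def __init__(self, n_items: int, n_user_features: int, dim: int = 32):
        super().__init__()
        self.item_emb = nn.Embedding(n_items, dim)       # one latent vector per item
        self.user_map = nn.Linear(n_user_features, dim)  # user features -> item latent space

    def forward(self, user_features: torch.Tensor, item_ids: torch.Tensor) -> torch.Tensor:
        u = self.user_map(user_features)  # (batch, dim)
        v = self.item_emb(item_ids)       # (batch, dim)
        logits = (u * v).sum(dim=1)       # row-wise dot product
        return torch.sigmoid(logits)      # interaction probability in (0, 1)

    def calculate_item_recommendations(self, user_features: np.ndarray, k: int = 10) -> np.ndarray:
        """Score the full catalog with NumPy matrix products on the CPU; return top-k item ids."""
        W = self.user_map.weight.detach().cpu().numpy()      # (dim, n_user_features)
        b = self.user_map.bias.detach().cpu().numpy()        # (dim,)
        items = self.item_emb.weight.detach().cpu().numpy()  # (n_items, dim)
        users = user_features @ W.T + b                      # (n_users, dim)
        scores = users @ items.T                             # (n_users, n_items)
        # Sigmoid is monotonic, so ranking raw dot products gives the same order as probabilities.
        return np.argsort(-scores, axis=1)[:, :k]


torch.manual_seed(0)
model = CFNet(n_items=100, n_user_features=8, dim=16)
feats = torch.randn(4, 8)                 # 4 hypothetical users with 8 features each
ids = torch.tensor([0, 5, 10, 99])
probs = model(feats, ids)                 # one probability per (user, item) pair
top = model.calculate_item_recommendations(feats.numpy(), k=5)
```

Skipping the sigmoid at inference time is a small design shortcut: it saves one elementwise pass over the full score matrix without changing the ranking.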

3. Training and Evaluation (CFNetTrainer)

The training loop is encapsulated in a dedicated trainer class so that experiments are easier to reproduce and track:

- Optimization: The network is optimized with the Adam optimizer using a default learning rate of 0.001 and L2 regularization (weight decay of 0.1) to reduce overfitting.

- Loss Function: The model minimizes Binary Cross Entropy Loss (BCELoss), which matches the 0/1 targets produced by implicit feedback and negative sampling.

- Metrics Tracking: Performance is evaluated after each epoch using three classification metrics: ROC-AUC, Accuracy, and Average Precision (AP).
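The training setup above can be sketched as one epoch loop plus an evaluation pass. This is not the project's CFNetTrainer: `TinyHybrid` is a hypothetical stand-in with the same structure as the CFNet described earlier, the data is random and synthetic (so the printed metric values mean nothing), and the metrics are written in NumPy for self-containment, where the project may well use a library such as scikit-learn.

```python
import numpy as np
import torch
from torch import nn


class TinyHybrid(nn.Module):
    """Hypothetical stand-in: item embeddings plus a linear user-feature map."""

    def __init__(self, n_items: int, n_feats: int, dim: int = 8):
        super().__init__()
        self.item_emb = nn.Embedding(n_items, dim)
        self.user_map = nn.Linear(n_feats, dim)

    def forward(self, feats, item_ids):
        return torch.sigmoid((self.user_map(feats) * self.item_emb(item_ids)).sum(dim=1))


def roc_auc(y: np.ndarray, p: np.ndarray) -> float:
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos, neg = p[y == 1], p[y == 0]
    greater = (pos[:, None] > neg[None, :]).sum()  # O(P*N) memory: fine for a sketch
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((greater + 0.5 * ties) / (len(pos) * len(neg)))


def average_precision(y: np.ndarray, p: np.ndarray) -> float:
    """Mean of precision@k taken at the rank of each positive (no tie handling)."""
    hits = y[np.argsort(-p)]
    precision_at_k = np.cumsum(hits) / np.arange(1, len(hits) + 1)
    return float((precision_at_k * hits).sum() / hits.sum())


def train_epoch(model, optimizer, loss_fn, users, items, labels, batch_size=256):
    model.train()
    perm = torch.randperm(len(labels))  # reshuffle every epoch
    for start in range(0, len(labels), batch_size):
        idx = perm[start:start + batch_size]
        optimizer.zero_grad()
        loss = loss_fn(model(users[idx], items[idx]), labels[idx])
        loss.backward()
        optimizer.step()


@torch.no_grad()
def evaluate(model, users, items, labels):
    model.eval()
    p, y = model(users, items).numpy(), labels.numpy()
    return {"roc_auc": roc_auc(y, p), "accuracy": float(((p >= 0.5) == y).mean()),
            "ap": average_precision(y, p)}


torch.manual_seed(0)
n_items, n_feats, n = 50, 6, 1000
users = torch.randn(n, n_feats)
items = torch.randint(0, n_items, (n,))
labels = (torch.rand(n) < 0.2).float()  # ~1 positive per 4 negatives, echoing the 4:1 ratio
tr, va = slice(0, 800), slice(800, n)   # 80/20 split

model = TinyHybrid(n_items, n_feats)
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3, weight_decay=0.1)  # values from the text
loss_fn = nn.BCELoss()
for epoch in range(3):
    train_epoch(model, optimizer, loss_fn, users[tr], items[tr], labels[tr])
    metrics = evaluate(model, users[va], items[va], labels[va])
    print(epoch, metrics)
```

Note that Adam's `weight_decay` adds the L2 penalty to the gradient before the adaptive scaling; `torch.optim.AdamW` is the decoupled alternative if that distinction matters.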

Background: Traditional Approaches

To see why this architecture is effective, it helps to review the traditional approaches it builds on.

Content-based Filtering

Image Credit: Google Developers Tutorial

Content-based filtering relies on explicit item features and user feedback.

- Pros: It scales easily to a large number of users.

- Cons: It relies heavily on hand-engineered features, so model performance is capped by the quality of those features.
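A toy content-based scorer makes both points concrete. In this sketch (the genre flags and the "average the liked items" profile are assumptions for illustration), a user's profile is built only from their own feedback and the item features, so no other user's data is needed; but recommendations can never go beyond what the hand-built features encode.

```python
import numpy as np

# Hypothetical hand-engineered item features: binary genre flags [action, comedy, documentary].
item_features = np.array([
    [1, 0, 0],  # item 0: action
    [1, 1, 0],  # item 1: action + comedy
    [0, 1, 0],  # item 2: comedy
    [0, 0, 1],  # item 3: documentary
], dtype=float)


def content_based_scores(liked: list, item_features: np.ndarray) -> np.ndarray:
    """Score items by dot product with a profile averaged from the user's liked items."""
    profile = item_features[liked].mean(axis=0)
    scores = item_features @ profile
    scores[liked] = -np.inf  # don't re-recommend items the user already liked
    return scores


scores = content_based_scores([0], item_features)  # user liked only item 0
best = int(np.argmax(scores))                       # item 1, the only other "action" item
```

The documentary (item 3) scores 0 no matter how many other users enjoy it, which is the feature-capped behavior the Cons bullet describes.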

Collaborative Filtering

Image Credit: Google Developers Tutorial

Collaborative filtering uses similarities between users and items simultaneously, learning user and item embeddings automatically from their interactions.

- Pros: It requires no domain knowledge and provides serendipitous recommendations.

- Cons: It suffers heavily from the cold-start problem when new items or users are introduced.
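The cold-start weakness falls directly out of matrix factorization, a common form of collaborative filtering. In this NumPy sketch (full-batch gradient descent with L2 regularization; treating observed zeros as negatives and the specific hyperparameters are assumptions), a brand-new user has no observed entries, so their embedding receives no data gradient and only shrinks from its random initialization.

```python
import numpy as np


def factorize(R, mask, dim=2, lr=0.05, reg=0.01, steps=2000, seed=0):
    """Learn user factors U and item factors V so that U @ V.T matches R where mask == 1."""
    rng = np.random.default_rng(seed)
    U = 0.1 * rng.standard_normal((R.shape[0], dim))
    V = 0.1 * rng.standard_normal((R.shape[1], dim))
    for _ in range(steps):
        err = mask * (U @ V.T - R)  # errors only where an entry was observed
        grad_U = err @ V + reg * U  # both gradients use the current U and V
        grad_V = err.T @ U + reg * V
        U -= lr * grad_U
        V -= lr * grad_V
    return U, V


# Rows are users, columns are items; 1 = interacted, 0 = observed non-interaction.
R = np.array([
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 1, 1],
    [0, 0, 0, 0],  # user 3 is brand new: nothing observed yet
], dtype=float)
mask = np.ones_like(R)
mask[3] = 0.0

U, V = factorize(R, mask)
fit_error = np.abs(mask * (U @ V.T - R)).max()  # small: existing users are fit well
new_user_scores = U[3] @ V.T                    # near zero: driven only by random init
```

Existing users are reconstructed closely, while the new user's scores carry no preference signal until they interact with something.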

The Hybrid Solution

CFNet replaces isolated per-user embeddings with a mapping network whose size depends on the number of user features, not the number of users. This keeps the model lightweight and easy to train, and lets it produce recommendations for a new user from their starting features alone.
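A quick parameter count shows the scaling difference on the user side. The sizes below (32 latent dimensions, 50 user features) are hypothetical, and the item embedding table, which still grows with the catalog, is left out of both counts.

```python
def per_user_embedding_params(n_users: int, dim: int) -> int:
    # One learned dim-sized vector per user: grows linearly with the user base.
    return n_users * dim


def mapping_params(n_user_features: int, dim: int) -> int:
    # A linear layer (weights + bias) from user features to the latent space: independent of n_users.
    return n_user_features * dim + dim


for n_users in (10_000, 1_000_000):
    print(n_users, per_user_embedding_params(n_users, 32), mapping_params(50, 32))
# prints:
# 10000 320000 1632
# 1000000 32000000 1632
```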